sklearn.decomposition.KernelPCA

- class sklearn.decomposition.KernelPCA(n_components=None, kernel='linear', gamma=None, degree=3, coef0=1, kernel_params=None, alpha=1.0, fit_inverse_transform=False, eigen_solver='auto', tol=0, max_iter=None, remove_zero_eig=False, random_state=None)

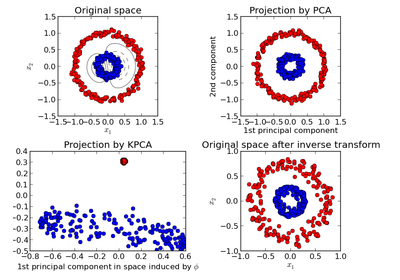

Kernel Principal Component Analysis (KPCA)

Non-linear dimensionality reduction through the use of kernels (see Pairwise metrics, Affinities and Kernels).

Read more in the User Guide.
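As a minimal sketch of typical usage, the snippet below projects a toy dataset of two concentric circles with an RBF kernel. The dataset, the value `gamma=10`, and the variable names are illustrative assumptions, not recommendations from this page.

```python
from sklearn.datasets import make_circles
from sklearn.decomposition import KernelPCA

# Two concentric circles: not linearly separable in the input space.
X, y = make_circles(n_samples=200, factor=0.3, noise=0.05, random_state=0)

# gamma=10 is an illustrative choice, not a recommended default.
kpca = KernelPCA(n_components=2, kernel="rbf", gamma=10)
X_kpca = kpca.fit_transform(X)

print(X_kpca.shape)  # (200, 2)
```

With a linear kernel the same call reduces to ordinary PCA on the centered data, so the non-linear behaviour comes entirely from the choice of kernel and its parameters.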

Parameters: n_components : int or None, default=None

Number of components. If None, all non-zero components are kept.

kernel : “linear” | “poly” | “rbf” | “sigmoid” | “cosine” | “precomputed”, default=“linear”

Kernel to use.

degree : int, default=3

Degree for poly kernels. Ignored by other kernels.

gamma : float, optional

Kernel coefficient for rbf and poly kernels. Default: 1/n_features. Ignored by other kernels.

coef0 : float, optional

Independent term in poly and sigmoid kernels. Ignored by other kernels.

kernel_params : mapping of string to any, optional

Parameters (keyword arguments) and values for kernel passed as callable object. Ignored by other kernels.

alpha : float, default=1.0

Hyperparameter of the ridge regression that learns the inverse transform (when fit_inverse_transform=True).

fit_inverse_transform : bool, default=False

Learn the inverse transform for non-precomputed kernels (i.e. learn to find the pre-image of a point).

eigen_solver : string [‘auto’ | ‘dense’ | ‘arpack’], default=‘auto’

Select eigensolver to use. If n_components is much less than the number of training samples, arpack may be more efficient than the dense eigensolver.

tol : float, default=0

Convergence tolerance for arpack. If 0, the optimal value will be chosen by arpack.

max_iter : int or None, default=None

Maximum number of iterations for arpack. If None, the optimal value will be chosen by arpack.

remove_zero_eig : boolean, default=False

If True, then all components with zero eigenvalues are removed, so that the number of components in the output may be < n_components (and sometimes even zero due to numerical instability). When n_components is None, this parameter is ignored and components with zero eigenvalues are removed regardless.

random_state : int seed, RandomState instance, or None, default=None

A pseudo-random number generator used for the initialization of the residuals when eigen_solver == ‘arpack’.
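The `kernel` options above include “precomputed”, in which case `fit` and `transform` receive kernel matrices instead of feature vectors. The sketch below, on made-up random data with an illustrative `gamma`, checks that a hand-computed RBF kernel matrix gives the same projection as the built-in “rbf” kernel. It assumes `sklearn.metrics.pairwise.rbf_kernel` for building the matrices.

```python
import numpy as np
from sklearn.decomposition import KernelPCA
from sklearn.metrics.pairwise import rbf_kernel

rng = np.random.RandomState(0)
X_train = rng.rand(30, 4)
X_new = rng.rand(5, 4)
gamma = 0.5  # illustrative value

# Built-in RBF kernel.
kpca_rbf = KernelPCA(n_components=3, kernel="rbf", gamma=gamma)
Z_rbf = kpca_rbf.fit(X_train).transform(X_new)

# Same kernel, computed by hand and passed as a precomputed matrix.
K_train = rbf_kernel(X_train, X_train, gamma=gamma)  # shape (30, 30)
K_new = rbf_kernel(X_new, X_train, gamma=gamma)      # shape (5, 30)
kpca_pre = KernelPCA(n_components=3, kernel="precomputed")
Z_pre = kpca_pre.fit(K_train).transform(K_new)

# Components are defined only up to sign, so compare absolute values.
same = np.allclose(np.abs(Z_rbf), np.abs(Z_pre), atol=1e-6)
```

Note that the matrix passed to `transform` has one row per new sample and one column per training sample.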

Attributes: lambdas_ :

Eigenvalues of the centered kernel matrix.

alphas_ :

Eigenvectors of the centered kernel matrix.

dual_coef_ :

Inverse transform matrix.

X_transformed_fit_ :

Projection of the fitted data on the kernel principal components.
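To make the relationship between these attributes concrete, here is a rough NumPy sketch of the underlying computation: center the kernel matrix, take its leading eigenpairs, and scale each eigenvector by the square root of its eigenvalue to obtain the projection of the training data. The random data, `gamma`, and all variable names are assumptions for illustration; the estimator's attributes are deliberately not accessed, and the result is only compared with `fit_transform`.

```python
import numpy as np
from sklearn.decomposition import KernelPCA
from sklearn.metrics.pairwise import rbf_kernel

rng = np.random.RandomState(0)
X = rng.rand(20, 3)
n, k, gamma = X.shape[0], 2, 1.0

# Kernel matrix and its double-centered version.
K = rbf_kernel(X, gamma=gamma)
one_n = np.full((n, n), 1.0 / n)
K_c = K - one_n @ K - K @ one_n + one_n @ K @ one_n

# Symmetric eigendecomposition; eigh returns eigenvalues in ascending order,
# so reverse and keep the k largest.
eigvals, eigvecs = np.linalg.eigh(K_c)
eigvals, eigvecs = eigvals[::-1][:k], eigvecs[:, ::-1][:, :k]

# Projection of the training data: eigenvectors scaled by sqrt(eigenvalue).
X_manual = eigvecs * np.sqrt(eigvals)

X_sklearn = KernelPCA(n_components=k, kernel="rbf", gamma=gamma).fit_transform(X)

# Eigenvectors are defined only up to sign, so compare absolute values.
match = np.allclose(np.abs(X_manual), np.abs(X_sklearn), atol=1e-6)
```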

References

Kernel PCA was introduced in:

- Bernhard Schoelkopf, Alexander J. Smola, and Klaus-Robert Mueller. 1999. Kernel principal component analysis. In Advances in Kernel Methods, MIT Press, Cambridge, MA, USA, 327-352.

Methods

| Method | Description |
| --- | --- |
| fit(X[, y]) | Fit the model from data in X. |
| fit_transform(X[, y]) | Fit the model from data in X and transform X. |
| get_params([deep]) | Get parameters for this estimator. |
| inverse_transform(X) | Transform X back to original space. |
| set_params(**params) | Set the parameters of this estimator. |
| transform(X) | Transform X. |

- __init__(n_components=None, kernel='linear', gamma=None, degree=3, coef0=1, kernel_params=None, alpha=1.0, fit_inverse_transform=False, eigen_solver='auto', tol=0, max_iter=None, remove_zero_eig=False, random_state=None)

- fit(X, y=None)

Fit the model from data in X.

Parameters: X : array-like, shape (n_samples, n_features)

Training vector, where n_samples is the number of samples and n_features is the number of features.

Returns: self : object

Returns the instance itself.

- fit_transform(X, y=None, **params)

Fit the model from data in X and transform X.

Parameters: X : array-like, shape (n_samples, n_features)

Training vector, where n_samples is the number of samples and n_features is the number of features.

Returns: X_new : array-like, shape (n_samples, n_components)
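As an illustration of how `fit_transform` relates to `fit` followed by `transform`, the sketch below fits on made-up training data, then projects both the training data and unseen data. The polynomial kernel, array sizes, and variable names are arbitrary choices for the example.

```python
import numpy as np
from sklearn.decomposition import KernelPCA

rng = np.random.RandomState(0)
X_train = rng.rand(40, 5)
X_test = rng.rand(10, 5)

kpca = KernelPCA(n_components=3, kernel="poly", degree=2)

# Fit and project the training data in one call.
Z_train = kpca.fit_transform(X_train)

# Projecting the training data again via transform should agree numerically.
Z_again = kpca.transform(X_train)
agree = np.allclose(Z_train, Z_again, atol=1e-6)

# New samples are projected onto the components learned from X_train.
Z_test = kpca.transform(X_test)
```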

- get_params(deep=True)

Get parameters for this estimator.

Parameters: deep : boolean, optional

If True, will return the parameters for this estimator and contained subobjects that are estimators.

Returns: params : mapping of string to any

Parameter names mapped to their values.
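A short sketch of reading parameters back from an estimator; the parameter values are arbitrary and the name `kpca_copy` is only for illustration.

```python
from sklearn.decomposition import KernelPCA

kpca = KernelPCA(n_components=2, kernel="rbf", gamma=0.1)
params = kpca.get_params()

print(params["kernel"])  # rbf
print(params["gamma"])   # 0.1

# The returned mapping can be used to build an identically configured estimator.
kpca_copy = KernelPCA(**params)
```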

- inverse_transform(X)

Transform X back to original space.

Parameters: X : array-like, shape (n_samples, n_components)

Returns: X_new : array-like, shape (n_samples, n_features)

References

“Learning to Find Pre-Images”, G. Bakır et al., 2004.
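The following sketch shows a round trip through `transform` and `inverse_transform`. It assumes the estimator was constructed with `fit_inverse_transform=True`, as the parameter description requires; the data, `gamma`, and `alpha=0.1` are illustrative values only. The reconstruction is approximate, since the pre-image is learned by ridge regression.

```python
import numpy as np
from sklearn.decomposition import KernelPCA

rng = np.random.RandomState(0)
X = rng.rand(50, 4)

# fit_inverse_transform=True is needed for inverse_transform to be available.
kpca = KernelPCA(n_components=3, kernel="rbf", gamma=1.0,
                 fit_inverse_transform=True, alpha=0.1)
Z = kpca.fit_transform(X)           # shape (50, 3)
X_back = kpca.inverse_transform(Z)  # shape (50, 4), approximate pre-images

# Mean squared reconstruction error; its size depends on the kernel,
# the number of components, and alpha.
reconstruction_error = np.mean((X - X_back) ** 2)
```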

- set_params(**params)

Set the parameters of this estimator.

The method works on simple estimators as well as on nested objects (such as pipelines). The latter have parameters of the form <component>__<parameter> so that it’s possible to update each component of a nested object.

Returns: self :
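A brief sketch of both forms: setting parameters directly on the estimator, and setting them through a pipeline using the `<component>__<parameter>` syntax. The step names `"scale"` and `"kpca"` and the parameter values are assumptions chosen for the example.

```python
from sklearn.decomposition import KernelPCA
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Simple estimator: parameters are set by name; the estimator itself is returned.
kpca = KernelPCA(kernel="rbf")
returned = kpca.set_params(n_components=2, gamma=0.5)

# Nested object: prefix the parameter with the step name and a double underscore.
pipe = Pipeline([("scale", StandardScaler()), ("kpca", KernelPCA(kernel="rbf"))])
pipe.set_params(kpca__gamma=0.5, kpca__n_components=2)
```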