sklearn.ensemble.IsolationForest

- class sklearn.ensemble.IsolationForest(n_estimators=100, max_samples='auto', max_features=1.0, bootstrap=False, n_jobs=1, random_state=None, verbose=0)

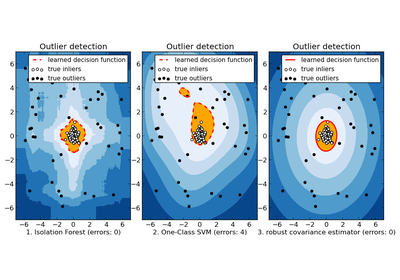

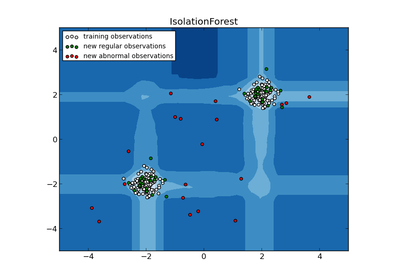

Isolation Forest Algorithm

Return the anomaly score of each sample using the IsolationForest algorithm.

The IsolationForest ‘isolates’ observations by randomly selecting a feature and then randomly selecting a split value between the maximum and minimum values of the selected feature.

Since recursive partitioning can be represented by a tree structure, the number of splittings required to isolate a sample is equivalent to the path length from the root node to the terminating node.

This path length, averaged over a forest of such random trees, is a measure of abnormality and our decision function.

Random partitioning produces noticeably shorter paths for anomalies. Hence, when a forest of random trees collectively produces shorter path lengths for particular samples, those samples are highly likely to be anomalies.
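The isolation idea described above can be sketched in plain Python. This is a minimal illustration, not scikit-learn's implementation: the helper names `isolation_path_length` and `mean_path_length` are hypothetical, the toy data is made up, and the sketch skips the subsampling and depth limit that a real isolation forest applies. It also restricts the random feature choice to features that still vary inside the current partition.

```python
import random


def isolation_path_length(point, data, rng):
    """Count the random splits needed until `point` is alone in its partition.

    `point` must be one of the rows in `data`.
    """
    current = list(data)
    depth = 0
    while len(current) > 1:
        # Only features that still vary inside the partition can be split.
        splittable = [
            f for f in range(len(point))
            if min(row[f] for row in current) < max(row[f] for row in current)
        ]
        if not splittable:
            break  # the remaining rows are exact duplicates of the point
        feature = rng.choice(splittable)
        lo = min(row[feature] for row in current)
        hi = max(row[feature] for row in current)
        # Random split value between the minimum and maximum of the feature.
        split = rng.uniform(lo, hi)
        # Keep only the side of the split that contains the point.
        if point[feature] < split:
            current = [row for row in current if row[feature] < split]
        else:
            current = [row for row in current if row[feature] >= split]
        depth += 1
    return depth


def mean_path_length(point, data, n_trees=200, seed=1):
    """Average the path length over many independent random partitionings."""
    rng = random.Random(seed)
    total = sum(isolation_path_length(point, data, rng) for _ in range(n_trees))
    return total / n_trees


# Toy data: a Gaussian cloud plus one far-away point (illustrative values).
data_rng = random.Random(0)
inliers = [(data_rng.gauss(0, 1), data_rng.gauss(0, 1)) for _ in range(255)]
outlier = (8.0, 8.0)
data = inliers + [outlier]

# The inlier closest to the origin sits deep inside the cloud.
central = min(inliers, key=lambda p: p[0] ** 2 + p[1] ** 2)

outlier_depth = mean_path_length(outlier, data)
central_depth = mean_path_length(central, data)
print(outlier_depth, central_depth)
```

The far-away point is typically cut off after only a few splits, while the central point needs many more, which is the signal the forest averages over its trees.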

Parameters: n_estimators : int, optional (default=100)

The number of base estimators in the ensemble.

max_samples : int or float, optional (default=“auto”)

The number of samples to draw from X to train each base estimator.

- If int, then draw max_samples samples.

- If float, then draw max_samples * X.shape[0] samples.

- If “auto”, then max_samples=min(256, n_samples).

If max_samples is larger than the number of samples provided, all samples will be used for all trees (no sampling).
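The rules for `max_samples` listed above can be written out as a small function. This is only a sketch of the documented rules; `resolve_max_samples` is a hypothetical helper and not part of scikit-learn, and the library's own validation may differ in details such as rounding or warnings.

```python
def resolve_max_samples(max_samples, n_samples):
    """Return the number of samples drawn per tree under the documented rules.

    Hypothetical helper for illustration only.
    """
    if max_samples == "auto":
        return min(256, n_samples)
    if isinstance(max_samples, int):
        # Asking for more samples than exist means all samples are used.
        return min(max_samples, n_samples)
    # A float is interpreted as a fraction of the training set.
    return int(max_samples * n_samples)


print(resolve_max_samples("auto", 1000))  # capped at 256
print(resolve_max_samples(0.5, 1000))     # half of the rows
print(resolve_max_samples(5000, 1000))    # no sampling, all rows
```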

max_features : int or float, optional (default=1.0)

The number of features to draw from X to train each base estimator.

- If int, then draw max_features features.

- If float, then draw max_features * X.shape[1] features.

bootstrap : boolean, optional (default=False)

Whether samples are drawn with replacement.

n_jobs : integer, optional (default=1)

The number of jobs to run in parallel for both fit and predict. If -1, then the number of jobs is set to the number of cores.

random_state : int, RandomState instance or None, optional (default=None)

If int, random_state is the seed used by the random number generator. If RandomState instance, random_state is the random number generator. If None, the random number generator is the RandomState instance used by np.random.

verbose : int, optional (default=0)

Controls the verbosity of the tree building process.

Attributes: estimators_ : list of ExtraTreeRegressor

The collection of fitted sub-estimators.

estimators_samples_ : list of arrays

The subset of drawn samples (i.e., the in-bag samples) for each base estimator.

max_samples_ : integer

The actual number of samples used to train each base estimator.
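As a rough illustration of these attributes, the following sketch fits a small forest on synthetic data and inspects it. The data, the seed, and the choice of 10 trees are assumptions made for the example; attribute contents such as the exact form of `estimators_samples_` may vary between scikit-learn releases, so only lengths are checked here.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

# Synthetic training data (illustrative only).
rng = np.random.RandomState(0)
X = rng.normal(size=(1000, 2))

forest = IsolationForest(n_estimators=10, max_samples="auto", random_state=0)
forest.fit(X)

n_trees = len(forest.estimators_)              # one fitted tree per estimator
n_sample_sets = len(forest.estimators_samples_)  # one in-bag subset per tree
n_drawn = forest.max_samples_                  # "auto" resolves to min(256, n_samples)

print(n_trees, n_sample_sets, n_drawn)
```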

References

[R141] Liu, Fei Tony, Ting, Kai Ming and Zhou, Zhi-Hua. “Isolation forest.” Data Mining, 2008. ICDM’08. Eighth IEEE International Conference on.

[R142] Liu, Fei Tony, Ting, Kai Ming and Zhou, Zhi-Hua. “Isolation-based anomaly detection.” ACM Transactions on Knowledge Discovery from Data (TKDD) 6.1 (2012): 3.

Methods

| Method | Description |
|---|---|
| decision_function(X) | Average of the decision functions of the base classifiers. |
| fit(X[, y, sample_weight]) | Fit estimator. |
| get_params([deep]) | Get parameters for this estimator. |
| predict(X) | Predict anomaly score of X with the IsolationForest algorithm. |
| set_params(**params) | Set the parameters of this estimator. |

- __init__(n_estimators=100, max_samples='auto', max_features=1.0, bootstrap=False, n_jobs=1, random_state=None, verbose=0)

- decision_function(X)

Average of the decision functions of the base classifiers.

Parameters: X : {array-like, sparse matrix}, shape (n_samples, n_features)

The training input samples. Sparse matrices are accepted only if they are supported by the base estimator.

Returns: score : array, shape (n_samples,)

The decision function of the input samples.
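A minimal usage sketch of `decision_function` follows, assuming synthetic Gaussian training data and two hand-picked query points. The absolute values and the zero point of the returned scores have changed between scikit-learn releases, so the example only compares the two scores with each other: in the releases I am aware of, a lower value means a more abnormal sample.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

# Synthetic training data and two query points (illustrative only).
rng = np.random.RandomState(42)
X_train = rng.normal(size=(500, 2))
X_query = np.array([
    [0.0, 0.0],  # in the middle of the training cloud
    [6.0, 6.0],  # far away from it
])

forest = IsolationForest(n_estimators=100, random_state=42).fit(X_train)
scores = forest.decision_function(X_query)

print(scores.shape)
print(scores[0] > scores[1])  # the central point should look more normal
```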

- fit(X, y=None, sample_weight=None)

Fit estimator.

Parameters: X : array-like or sparse matrix, shape (n_samples, n_features)

The input samples. Use dtype=np.float32 for maximum efficiency. Sparse matrices are also supported; use sparse csc_matrix for maximum efficiency.

Returns: self : object

Returns self.

- get_params(deep=True)

Get parameters for this estimator.

Parameters: deep : boolean, optional

If True, will return the parameters for this estimator and contained subobjects that are estimators.

Returns: params : mapping of string to any

Parameter names mapped to their values.
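One common use of `get_params` is to read back a configuration and build a fresh, unfitted estimator from it. The sketch below assumes arbitrary parameter values chosen for the example.

```python
from sklearn.ensemble import IsolationForest

forest = IsolationForest(n_estimators=50, random_state=0)

# Mapping of constructor parameter names to their current values.
params = forest.get_params()
print(params["n_estimators"], params["max_samples"])

# The mapping can be passed back to the constructor to get an unfitted copy.
copy = IsolationForest(**params)
same_config = copy.get_params() == params
```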

- predict(X)

Predict anomaly score of X with the IsolationForest algorithm.

The anomaly score of an input sample is computed as the mean anomaly score of the trees in the forest.

The measure of normality of an observation given a tree is the depth of the leaf containing this observation, which is equivalent to the number of splittings required to isolate this point. If the leaf contains several observations (n_left of them), the average path length of an isolation tree built on n_left samples is added to the depth.

Parameters: X : array-like or sparse matrix of shape (n_samples, n_features)

The input samples. Internally, they will be converted to dtype=np.float32, and a sparse matrix, if provided, will be converted to a sparse csr_matrix.

Returns: scores : array of shape (n_samples,)

The anomaly score of the input samples. The lower, the more normal.
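The scoring described above follows the formulas in [R141], which can be sketched in a few lines. This is an illustration of the paper's definitions under the usual approximation of the harmonic number, H(i) ≈ ln(i) + 0.5772; the function names are hypothetical, and the exact value returned by a given scikit-learn release may be shifted or negated relative to this score.

```python
import math

EULER_GAMMA = 0.5772156649  # Euler-Mascheroni constant


def average_path_length(n):
    """Average path length c(n) of an unsuccessful search in a tree of n points."""
    if n <= 1:
        return 0.0
    if n == 2:
        return 1.0
    harmonic = math.log(n - 1) + EULER_GAMMA  # approximates H(n - 1)
    return 2.0 * harmonic - 2.0 * (n - 1) / n


def adjusted_depth(leaf_depth, n_left):
    """Depth of a leaf plus the correction for the n_left samples left in it."""
    return leaf_depth + average_path_length(n_left)


def anomaly_score(mean_depth, n_subsample):
    """Score s = 2 ** (-E[h(x)] / c(n)); values closer to 1 are more anomalous."""
    return 2.0 ** (-mean_depth / average_path_length(n_subsample))


c_256 = average_path_length(256)
print(c_256)
print(anomaly_score(2.0, 256))    # short average path: score well above 0.5
print(anomaly_score(c_256, 256))  # average path equal to c(n): score of 0.5
```

A sample whose average depth equals c(n) scores 0.5, and shorter paths push the score toward 1, which matches the statement that lower scores are more normal.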

- set_params(**params)

Set the parameters of this estimator.

The method works on simple estimators as well as on nested objects (such as pipelines). The latter have parameters of the form <component>__<parameter> so that it’s possible to update each component of a nested object.

Returns: self :
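The `<component>__<parameter>` form can be illustrated with a small pipeline. The step names `scale` and `iforest` and the parameter values are assumptions chosen for this sketch.

```python
from sklearn.ensemble import IsolationForest
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Step names "scale" and "iforest" are arbitrary labels chosen for this example.
pipe = Pipeline([
    ("scale", StandardScaler()),
    ("iforest", IsolationForest(random_state=0)),
])

# Update a parameter of the nested IsolationForest through the pipeline.
returned = pipe.set_params(iforest__n_estimators=25)

print(pipe.named_steps["iforest"].n_estimators)

# On a plain estimator the parameter name is used directly.
forest = IsolationForest().set_params(n_estimators=10)
```

Because `set_params` returns the estimator itself, calls can be chained as in the last line.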